During my previous career in internal IT audit, I audited various processes and technologies, and each audit resulted in a report with findings and recommendations. Each finding was then assigned a risk rating (high, medium, or low), which drove the timeline management had for remediation.

One of the challenges I recall facing was the process used to assign the risk ratings. The only tool we had was a set of subjective definitions of high, medium, and low risk. Because the definitions were so subjective, it was not uncommon for the business to disagree with the ratings assigned. There were even times when members of my own team arrived at different risk ratings.

Additionally, because there were only three categories of risk (high, medium, low), a large number of audit findings ended up in the high-risk bucket. These were all risks that management was unable to accept.

Further, because an auditor’s job is to focus on risk and controls, there is a tendency to focus more on the controls, an unintended consequence that may cause you to lose sight of the underlying asset and whether it is relevant.

It wasn't until I decided to pivot my career from audit to a risk management position with RiskLens that I began to view risk differently. This was when I was introduced to the FAIR model. One of the benefits of FAIR is that audit departments can leverage it to view risk through the same lens as management and the board (the financial lens), allowing for more effective and efficient conversations.

More from Rachel Slabotsky: What I Learned Leaving Internal Audit for Risk Management

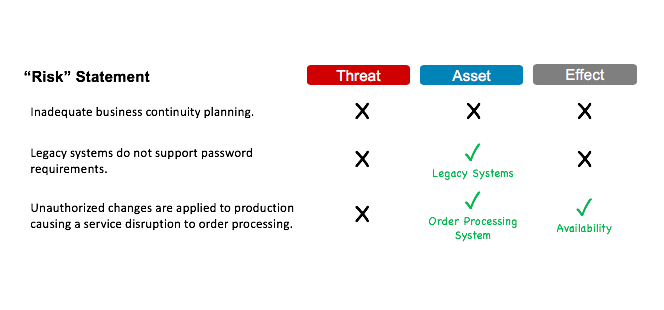

Let’s start with a few examples of statements that, according to FAIR, are not properly defined risks:

- Inadequate business continuity planning

- Failure of critical infrastructure

- Unauthorized changes applied to production, causing a service disruption to order processing

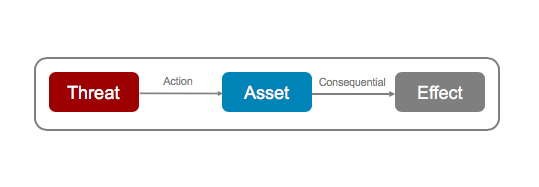

According to FAIR, a risk must contain three elements: an asset, a threat, and an effect.

An asset is defined as anything of value that can be affected in a manner that results in loss (e.g. facilities, data, systems, servers, key processes, etc.)

A threat is defined as anything capable of acting against an asset in a manner that can result in loss (e.g. malicious employees, cybercriminals, an act of nature). In other words, a threat is the "acting force" that causes harm to an asset.

An effect is the characteristic of the asset that the threat seeks to impact. In cybersecurity, the effect can be classified under three main buckets: confidentiality, availability and integrity/fraud.

Applying this logic to the statements above shows that each of those traditional “risk” statements is missing at least one of these elements.

In other words, the above statements are not by themselves risks; they describe conditions that contribute to a risk.
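To make the three-element test concrete, here is a minimal Python sketch, assuming a risk can be modeled as a record with optional `asset`, `threat`, and `effect` fields. The `RiskStatement` class, the `Effect` enum, and the way two of the statements from the list above are encoded are illustrative assumptions, not part of FAIR or any RiskLens tooling.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Effect(Enum):
    """The three loss-effect buckets named in the text for cybersecurity."""
    CONFIDENTIALITY = "confidentiality"
    AVAILABILITY = "availability"
    INTEGRITY = "integrity/fraud"


@dataclass(frozen=True)
class RiskStatement:
    """A candidate risk (hypothetical model); any element may be absent (None)."""
    asset: Optional[str] = None
    threat: Optional[str] = None
    effect: Optional[Effect] = None

    def missing_elements(self) -> list[str]:
        """Return the names of the FAIR elements this statement leaves unspecified."""
        return [name for name in ("asset", "threat", "effect")
                if getattr(self, name) is None]

    def is_properly_defined(self) -> bool:
        """A statement is a properly defined risk only if nothing is missing."""
        return not self.missing_elements()


# Hypothetical encoding of the third listed statement: it names an asset
# (order processing) and an effect (a service disruption, i.e. availability),
# but no threat.
unauthorized_change = RiskStatement(asset="order processing",
                                    effect=Effect.AVAILABILITY)
print(unauthorized_change.missing_elements())  # ['threat']

# Hypothetical encoding of "Inadequate business continuity planning",
# which names none of the three elements.
bcp = RiskStatement()
print(bcp.missing_elements())  # ['asset', 'threat', 'effect']
```

The check only confirms that each element is present; deciding which asset, threat, and effect are actually relevant is still the analyst's job.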


To determine the “amount of risk,” you must first restate these statements as normalized risk statements. Let’s walk through our examples of faulty risk statements to demonstrate this point.

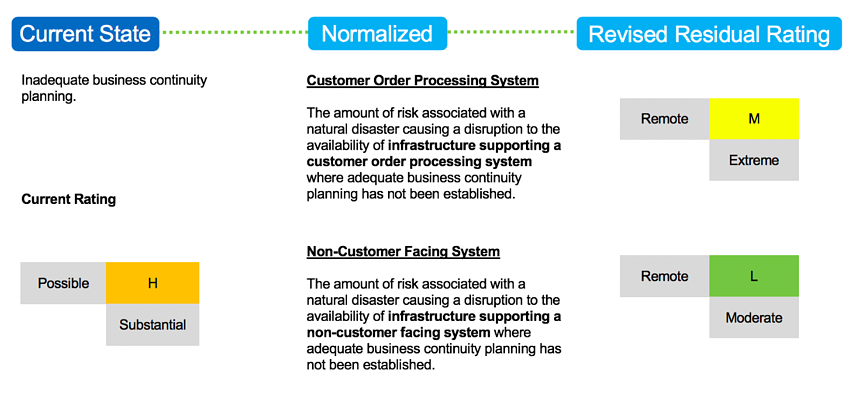

Example 1: Risk scenario is not defined (missing asset, threat, effect)

In the first example, the “risk” on the left does not contain an asset (or arguably a threat and effect). However, if we assume that the threat is an act of nature and the effect is availability, we are still left with determining the asset. As you can see from the normalized risk statements, the risk rating can change based on whether the asset is a customer order processing system vs. a non-customer facing system.
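The normalization step in this example, filling in assumed values for the missing elements and listing the resulting statements side by side, can be sketched as follows. The `RiskStatement` class, the `normalize` helper, and its `candidates` argument are hypothetical names; the sketch only enumerates the normalized statements and does not assign ratings, which still come from the analysis itself.

```python
from dataclasses import dataclass, replace
from itertools import product
from typing import Optional


@dataclass(frozen=True)
class RiskStatement:
    """Hypothetical model of a risk statement; None marks a missing element."""
    asset: Optional[str] = None
    threat: Optional[str] = None
    effect: Optional[str] = None

    def render(self) -> str:
        """Render a complete statement in threat/effect/asset form."""
        return f"{self.threat} causes a loss of {self.effect} to the {self.asset}"


def normalize(partial: RiskStatement,
              candidates: dict[str, list[str]]) -> list[RiskStatement]:
    """Fill every missing element from its candidate list and return one
    fully specified statement per combination of candidate values."""
    missing = [f for f in ("asset", "threat", "effect")
               if getattr(partial, f) is None]
    for f in missing:
        if f not in candidates:
            raise ValueError(f"no candidate values for missing element {f!r}")
    options = [candidates[f] for f in missing]
    return [replace(partial, **dict(zip(missing, combo)))
            for combo in product(*options)]


# Example 1: assume the threat is an act of nature and the effect is
# availability, then vary the asset.
assumed = RiskStatement(threat="act of nature", effect="availability")
statements = normalize(assumed, {"asset": ["customer order processing system",
                                           "non-customer facing system"]})
for s in statements:
    print(s.render())
```

Each printed line is a separate normalized risk statement that can then be rated, which is where the difference between the customer-facing and non-customer-facing assets shows up.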

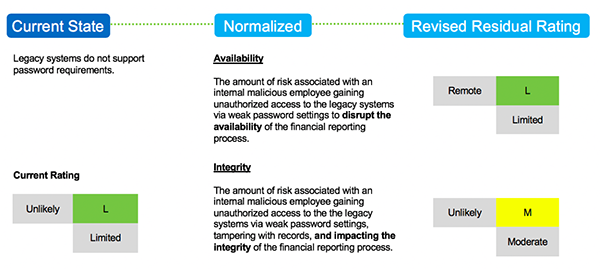

Example 2: Risk scenario is missing threat actor and loss effect

In this next example, the “risk” on the left does not include a threat actor or loss effect (e.g., confidentiality, availability, integrity). If we assume that the threat actor is a malicious employee, we are still left with determining the loss effect. As you can see from the normalized risk statements, the risk rating is higher if the assumed impact results in a loss of integrity (e.g., tampering with records in a financial reporting process). Also, note that a loss of integrity may have a lower rating if the asset were a non-financial system.

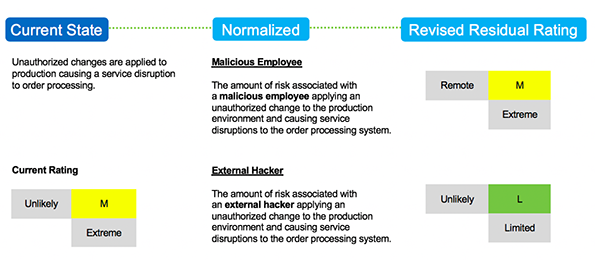

Example 3: Risk scenario is missing threat actor

In this final example, the “risk” on the left does not include a relevant threat actor (i.e., the "acting force" that causes harm to an asset). As you can see from the normalized risk statements, a scenario in which an external hacker maliciously applies an unauthorized change to production results in less potential loss exposure than one in which an employee maliciously applies the change.

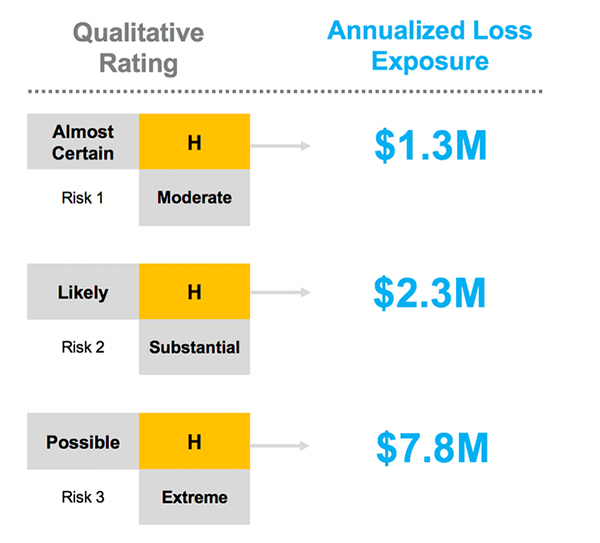

One caveat: the examples above were illustrated using simple, qualitative scales. Although FAIR can be applied qualitatively, it is intended to be used as a model to quantify risk in dollars (for instance, with the RiskLens CRQ Platform). This approach has numerous benefits, but the one I’d like to highlight is the ability to prioritize risks effectively. For example, quantifying the three risks below with the FAIR model can help prioritize risks that a qualitative model would otherwise assign equal ratings.

Note: Annualized Loss Exposure represents the combined probable frequency and probable magnitude of the evaluated risk on an annualized basis.
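As a rough illustration of how annualized loss exposure can separate risks that share a qualitative rating, here is a minimal Monte Carlo sketch in Python. It assumes the number of loss events per year follows a Poisson distribution and that the loss per event follows a triangular (low, most likely, high) distribution; these are simplifying choices for this sketch rather than the exact method FAIR tooling uses, and the three risks, their frequencies, and their dollar figures are hypothetical.

```python
import math
import random
from statistics import mean


def sample_poisson(rng: random.Random, lam: float) -> int:
    """Draw a Poisson-distributed event count (Knuth's method; fine for small lam)."""
    threshold = math.exp(-lam)
    k, p = 0, 1.0
    while True:
        p *= rng.random()
        if p <= threshold:
            return k
        k += 1


def simulate_ale(lef: float, magnitude: tuple[float, float, float],
                 years: int = 10_000, seed: int = 0) -> float:
    """Mean annualized loss exposure over `years` simulated years.

    lef: expected loss events per year (the Poisson rate).
    magnitude: (low, most_likely, high) loss per event in dollars.
    """
    low, mode, high = magnitude
    rng = random.Random(seed)
    totals = []
    for _ in range(years):
        events = sample_poisson(rng, lef)
        # Total loss for this simulated year: one magnitude draw per event.
        totals.append(sum(rng.triangular(low, high, mode) for _ in range(events)))
    return mean(totals)


# Hypothetical inputs for three risks a qualitative scale might all rate "high".
# Each entry: (loss events per year, (low, most likely, high) loss per event).
risks = {
    "Risk A": (0.5, (100_000, 500_000, 2_000_000)),
    "Risk B": (2.0, (10_000, 50_000, 200_000)),
    "Risk C": (0.1, (1_000_000, 5_000_000, 20_000_000)),
}

ale_by_risk = {name: simulate_ale(lef, mag) for name, (lef, mag) in risks.items()}
ranking = sorted(ale_by_risk, key=ale_by_risk.get, reverse=True)
for name in ranking:
    print(f"{name}: ${ale_by_risk[name]:,.0f} per year")
```

Because each risk's mean annualized loss is roughly its event frequency times its average loss per event, three risks that a qualitative scale would label equally "high" end up with clearly different dollar figures that can be ranked.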

In conclusion, FAIR is one way that auditors can view IT risks through the same lens as management and the board, allowing for more effective and efficient conversations. This skillset is itself a differentiator that can lead to better performance over time.
